York University offers WordPress websites to faculty members and departments that want to use a web publishing system in a managed environment. WordPress is an easy-to-use content management system that is suitable for websites and blogs (which allow readers to comment on posts). Our system supports the core features of WordPress and includes:

- UIT-managed WordPress installation with a controlled list of WordPress plugins and themes

- Passport York authentication for blog administrators

- An integrated user database that gives users access to multiple blogs

- UIT support to help you customize a York-branded blog theme

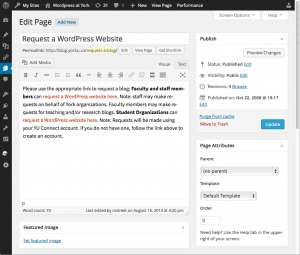

- Simple version control that lets you view or restore earlier versions of posts and pages

- RSS and Atom feeds, which can "push" WordPress headlines into other websites
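
As a rough illustration of how another website might consume those feed headlines, the sketch below parses an RSS 2.0 document and pulls out post titles and links. The sample feed, the `example.org` addresses, and the `extract_headlines` helper are all hypothetical and exist only for this example. A standard WordPress site usually publishes its RSS feed at `/feed/` and its Atom feed at `/feed/atom/`, but the addresses on York's managed installation may differ.

```python
import xml.etree.ElementTree as ET

# Hypothetical, trimmed-down RSS 2.0 feed. A real WordPress feed
# carries more fields (dates, authors, categories, descriptions).
SAMPLE_FEED = """<rss version="2.0">
  <channel>
    <title>Example Department Blog</title>
    <item>
      <title>Welcome to the new site</title>
      <link>https://example.org/welcome/</link>
    </item>
    <item>
      <title>Fall term office hours</title>
      <link>https://example.org/office-hours/</link>
    </item>
  </channel>
</rss>"""


def extract_headlines(feed_xml, limit=5):
    """Return up to `limit` (title, link) pairs from an RSS 2.0 document."""
    root = ET.fromstring(feed_xml)
    headlines = []
    # In RSS 2.0, each post is an <item> element inside <channel>.
    for item in root.findall("./channel/item")[:limit]:
        title = item.findtext("title", default="").strip()
        link = item.findtext("link", default="").strip()
        headlines.append((title, link))
    return headlines


if __name__ == "__main__":
    for title, link in extract_headlines(SAMPLE_FEED):
        print(f"{title}: {link}")
```

In practice the receiving site would download the feed from the blog's feed address rather than use an inline string, and many content management systems offer a built-in feed widget that does this without any code.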

Notes about your website's 'Look & Feel':

You will not be able to modify WordPress code or upload your own plugins or themes. This service is primarily for York-branded sites. If you require a specific theme or plugin, please see the Plugin Request page.

Managing users

Site administrators can invite anyone with a Passport York login to join their site as a contributor, writer, editor or administrator. By default, WordPress sites can be viewed by anyone, but if required, you can restrict access to York faculty, staff and students. More information can be found in the Managing Users section of the WordPress at York website.